Shanghai, China – While existential worries about artificial intelligence dominate discussions in Western tech circles, China is taking a decidedly more pragmatic approach as it rapidly advances its AI capabilities.

The contrast was apparent at China’s headline World AI Conference held in Shanghai last July. While forums in the West, like November’s UK AI Safety Summit, zeroed in on potentially catastrophic risks from advanced AI, the Shanghai agenda focused on practical applications like manufacturing, logistics and energy.

According to Nina Xiang, managing director at venture firm TH Capital, this pragmatic view reflects China’s national objectives as well as historical attitudes toward technology as a tool for governance and competitiveness.

“One thing that did not feature on the agenda in Shanghai was worries that advanced AI could spiral catastrophically out of control, perhaps leading to human extinction,” Xiang wrote in a recent commentary.

She argues that while Western thinkers like Elon Musk obsess over hypothetical doomsday scenarios like ‘Clippy’ run amok, China sees AI primarily as a means to boost its geopolitical standing.

China Views AI as Key to Revival

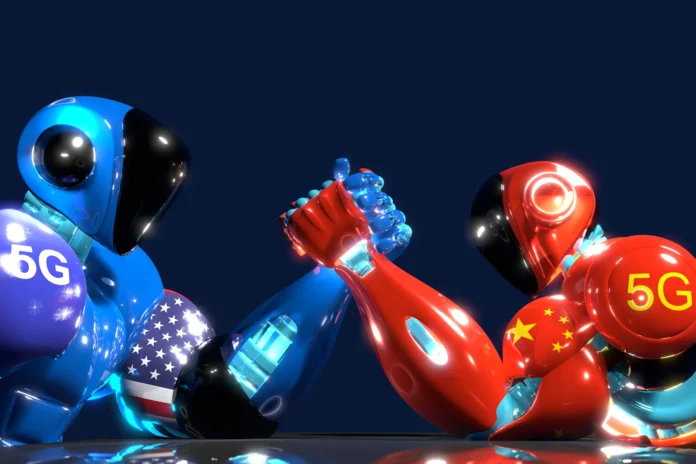

China has taken an aggressive top-down approach to dominating key technological spheres like AI, quantum computing and semiconductors. The aim is to eventually surpass the US in cutting-edge innovations critical to economic and military power.

Analysts say this national technology drive explains why Chinese researchers remain laser-focused on the nuts and bolts of advancing AI rather than worrying about speculative risks.

“Dominating the discourse in the Chinese AI community are pressing questions: How can China develop the equivalent of OpenAI? Who will create China’s version of ChatGPT? Why has China not achieved significant breakthroughs despite its extensive research output?” writes Xiang.

While Beijing has established ethical guidelines and mechanisms to monitor AI risks, policies tend to emphasize safety issues that are tangible today like privacy, deepfakes and algorithmic bias.

“Existential risks have been largely set aside in these policies, which treat artificial general intelligence as a distant worry that should not distract from addressing present concerns,” says Xiang.

Human Control More Important Than Human-Level AI

Chinese academics and policymakers have stressed that while advanced general AI may one day be achieved, humans must remain in control of AI systems rather than the other way around.

This confidence in humanity’s continued dominance over silicon-based machines differentiates China from the West’s more fearful perspective.

“Contrary to AI alarmists, who worry the technology could become uncontrollable, Beijing appears confident in humanity’s ability to maintain dominance. This confidence is perhaps rooted in China’s traditional holistic, harmonious and collective perspective on the relationship between man and nature,” writes Xiang.

By establishing clear rules and monitoring mechanisms for AI development and application, China believes it can reap the benefits of AI while minimizing adverse impacts.

AI Safety as Geopolitical Strategy

While China may not lie awake at night worrying about a potential robot apocalypse, its aggressive regulatory approach serves key strategic aims.

Xiang argues that by shaping global norms around emerging technology governance, China seeks to boost its influence as a world leader in science and innovation.

“Ultimately, China perceives AI safety as a strategic tool to bolster its geopolitical influence. Its assertive approach to rulemaking is intended to establish China as a key player, potentially the most significant one, in global AI risk management,” she writes.

This competitive stance also reflects fears that rival powers could use ethically questionable AI to gain advantage, for example in mass surveillance. China has criticized US policies like the CHIPS Act that aim to slow its tech progress.

AI Safety From a Human Perspective

China’s national focus on advancing AI for economic and social benefit, rather than on hypothetical machine takeover scenarios, speaks to differing attitudes between East and West.

“Rather than obsessing over the potential emergence of silicon-based consciousnesses, as AI doomers tend to do, we may be better off concentrating on the carbon-based lifeforms suffering the many ills of our world right here and now,” argues Xiang.

With over 1 billion people worldwide struggling with poverty, inequality, violence and food insecurity, debates on AI existential risk may seem detached from more immediate human priorities.

Others counter that advanced AI could fundamentally alter life on Earth for better or worse, so grappling with long-term implications remains valid and necessary even amid present crises.

Can East-West Cooperation Enhance AI Safety?

While the US and China fiercely compete in strategic technology, increased collaboration on AI safety and ethics may yield mutually beneficial outcomes.

Xiang suggests each side should remain open to insights from contrasting viewpoints. Overconfidence and defeatism represent opposite extremes, she argues – the answer likely lies somewhere in between.

Constructive exchange across diverse academic traditions and value systems could strengthen the mechanisms for governing technology wisely as rapid progress opens up both promising opportunities and serious perils.

If AI is to serve humanity rather than subjugate it, global cooperation alongside national competitiveness may prove essential in the long run.